How to Count Tokens Effectively

Posted on April 1, 2025 • 3 min read • 551 words

When using ChatGPT or any OpenAI API, one key constraint is the token limit. But what exactly is a token? How are they counted? And why is it crucial to manage them efficiently?

I. What Is a Token?

A token is a unit of text that the model processes. It could be a full word, part of a word, or even a special character.

Concrete Examples

| Text | Number of Tokens |
|---|---|
| Hello | 1 |
| I am a developer | 4 |
| Artificial intelligence is fascinating! | 5 |
| GPT is a powerful model. | 6 |

Specifics of Tokenization

- In English, short words are often 1 token (e.g., "Hello" = 1 token).

- In French and other languages, longer words can be split into multiple tokens (e.g., "développeur" or "intelligence" = 2 tokens).

- Punctuation also counts as tokens.

- Spaces are included with the following word.

- Acronyms are usually treated as 1 token.

On average, 100 tokens correspond to roughly 75 words, though this can vary depending on the language and writing style.
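
When no tokenizer is at hand, the 100-tokens-per-75-words rule of thumb above can be turned into a quick estimate. This is only a rough sketch: the function name `estimate_tokens` is made up for illustration, and the result can be noticeably off for short or non-English text.

```python
def estimate_tokens(text: str) -> int:
    """Rough token estimate: about 100 tokens for every 75 words."""
    words = len(text.split())
    # tokens ≈ words * 100 / 75, rounded up using integer arithmetic
    return -(-words * 100 // 75)


print(estimate_tokens("I am a developer"))
```

For anything that affects billing or hard limits, count with a real tokenizer instead of relying on this average.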

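Fun fact: French text usually uses more tokens than English, largely because the tokenizer's vocabulary is built mostly from English text, so longer and accented French words get split into more pieces.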

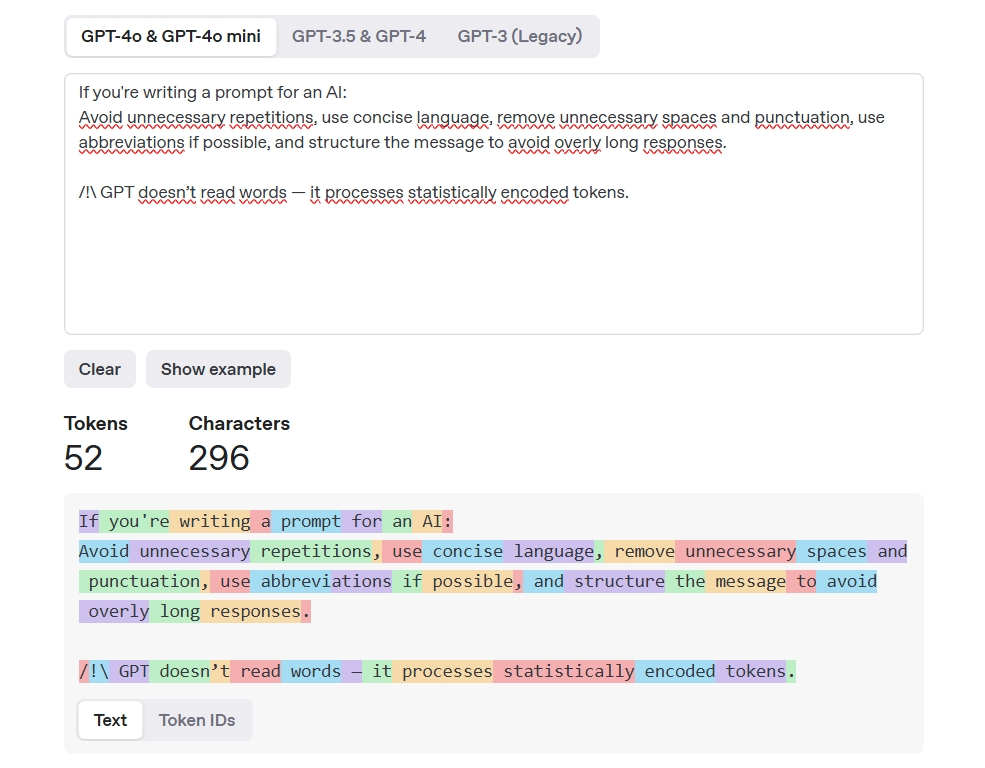

Example using the OpenAI tokenizer

II. Why Is Counting Tokens Important?

1. Reducing Usage Costs

The OpenAI API charges based on token usage. So it’s wise to optimize!

2. Preventing API Errors

Each model has a maximum token limit (its context window, shared between input and output):

- GPT-4 Turbo: 128K tokens

- GPT-4: 8K tokens

- GPT-3.5 Turbo: 16K tokens

- o3-mini: 128K tokens

If you exceed the limit, your request may be truncated or rejected.
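
A simple pre-flight check can catch oversized requests before they are sent. The sketch below assumes the rounded limits from the list above and treats each limit as covering prompt and response together; `CONTEXT_LIMITS` and `fits_in_context` are illustrative names, not part of any OpenAI library.

```python
# Rounded limits copied from the list above (input + output tokens combined).
CONTEXT_LIMITS = {
    "GPT-4 Turbo": 128_000,
    "GPT-4": 8_000,
    "GPT-3.5 Turbo": 16_000,
    "o3-mini": 128_000,
}


def fits_in_context(model: str, prompt_tokens: int, max_output_tokens: int) -> bool:
    """Return True if the prompt plus the reserved output fits the model's limit."""
    limit = CONTEXT_LIMITS[model]
    return prompt_tokens + max_output_tokens <= limit


print(fits_in_context("GPT-4", prompt_tokens=7_000, max_output_tokens=500))
print(fits_in_context("GPT-4", prompt_tokens=7_800, max_output_tokens=500))
```

Reserving room for the response matters: a prompt that fits on its own can still fail once the output is added.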

III. Prompt Engineering Optimization

- Write prompts as concisely as possible.

- Remove unnecessary repetition.

- Avoid verbose or redundant phrases.

IV. GPT Model Comparison & Token Limits

Here’s a comparison of the main models, their performance, token limits, and pricing:

| Model | Max Tokens (input + output) | Performance | Use Cases | Pricing (per million tokens) |
|---|---|---|---|---|
| GPT-4o | 128K | Multimodal (text, image, audio) model | Applications requiring multimodal understanding | Input: $2.50; Output: $10.00 |
| GPT-4o mini | 128K | Lighter, cost-effective version of GPT-4o | High-volume API usage in services | Input: $0.15; Output: $0.60 |
| o3-mini | 128K | Fast, cost-efficient reasoning-focused model | Tasks requiring quick, efficient reasoning | Free in ChatGPT, paid via API |
| GPT-3.5 Turbo | 16K | Balanced cost-performance | Interactive apps, chatbots, summaries | Input: $0.60; Output: $2.40 |

Notes:

- o3-mini is free in ChatGPT (with limitations), but paid via API.

- A larger token limit lets the model keep more of a long conversation in context.

- Pricing is higher for advanced models like GPT-4o.
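
To see what these prices mean for a single request, the per-million rates can be applied to the input and output token counts. This is a minimal sketch using the figures from the table above, which may be out of date by the time you read this; `PRICES` and `estimate_cost` are hypothetical names.

```python
# USD per million tokens as (input, output), copied from the table above.
PRICES = {
    "GPT-4o": (2.50, 10.00),
    "GPT-4o mini": (0.15, 0.60),
    "GPT-3.5 Turbo": (0.60, 2.40),
}


def estimate_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Estimated cost in USD for one request."""
    input_price, output_price = PRICES[model]
    total = input_tokens * input_price + output_tokens * output_price
    return total / 1_000_000


# Example: a 10,000-token prompt with a 2,000-token answer on GPT-4o.
print(f"${estimate_cost('GPT-4o', 10_000, 2_000):.4f}")
```

Output tokens are priced higher than input tokens in every row, so capping response length often saves more than trimming the prompt.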

V. Best Practices to Optimize Token Usage

To avoid exceeding your budget or token limits, follow these tips:

1. Avoid redundant wording

❌ Bad: "Can you give me a response on this topic and explain it in detail?"

✅ Good: "Can you explain this topic?"

2. Be concise

❌ Bad: "I’d like to know if you could summarize this text and also tell me how many tokens it contains."

✅ Good: "Please summarize this text and count the tokens."

3. Remove extra spaces and punctuation

An extra space or newline can cost tokens!
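
One way to apply this tip automatically is to normalize whitespace before sending a prompt. The helper below is a sketch with a made-up name, `squeeze_whitespace`; it assumes the prompt is plain prose, so avoid it for code or other content where indentation matters.

```python
import re


def squeeze_whitespace(prompt: str) -> str:
    """Collapse redundant spaces and blank lines in a plain-text prompt."""
    # Collapse runs of spaces and tabs into a single space.
    prompt = re.sub(r"[ \t]+", " ", prompt)
    # Drop the space left before or after each newline.
    prompt = re.sub(r" ?\n ?", "\n", prompt)
    # Keep at most one blank line between paragraphs.
    prompt = re.sub(r"\n{3,}", "\n\n", prompt)
    return prompt.strip()


print(repr(squeeze_whitespace("  Summarize   this \n\n\n\n text.  ")))
```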

4. Use abbreviations when appropriate

If the context allows it, shorten your language to reduce token count.

5. Limit response length explicitly

When prompting an AI, you can specify output length:

"Summarize in 50 words."

"Give me 3 key points."

Conclusion

- Tokens are the fundamental unit of text for ChatGPT and directly affect both cost and request limits.

- Several tools exist to count tokens, like tiktoken in Python or the OpenAI Tokenizer tool.

- It’s essential to optimize your prompt design to save tokens and ensure high-quality, cost-effective responses.
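
As a starting point with tiktoken, the sketch below counts tokens for a given encoding. It assumes `cl100k_base`, the encoding used by GPT-4 and GPT-3.5 Turbo (GPT-4o uses `o200k_base`). The function name `count_tokens` and the fallback to a word-based estimate are illustrative choices, not part of tiktoken itself.

```python
def count_tokens(text: str, encoding_name: str = "cl100k_base") -> int:
    """Count tokens with tiktoken, falling back to a rough word-based estimate."""
    try:
        import tiktoken  # third-party package: pip install tiktoken

        encoding = tiktoken.get_encoding(encoding_name)
        return len(encoding.encode(text))
    except Exception:
        # tiktoken is not installed, its encoding file could not be
        # downloaded, or encoding failed: use ~100 tokens per 75 words.
        words = len(text.split())
        return -(-words * 100 // 75)


print(count_tokens("GPT is a powerful model."))
```

The fallback keeps the script usable offline, but only the tiktoken path gives counts that match what the API actually bills.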